Last updated: April 10, 2026

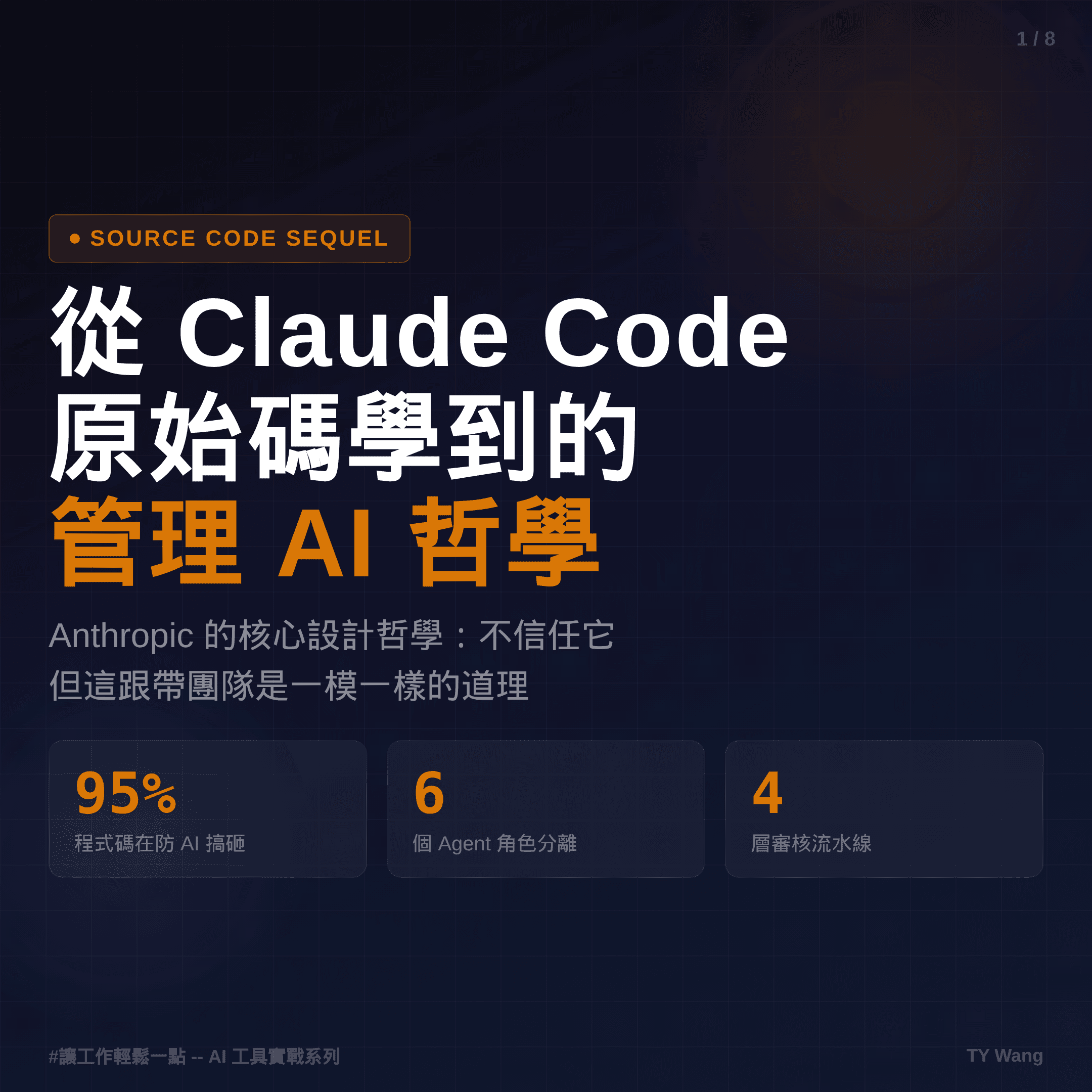

What I actually learned from the Claude Code source leak

The real lesson was not the drama. It was how the harness, CLAUDE.md, parallel agents, and context compression shape the product.

TL;DR

Key takeaways first

>The most important lesson from the Claude Code source leak is not the gossip, but what it reveals about harness, memory, permission, and workflow design.

>The incident reinforces that the model is only one layer. Product quality often comes from the operating framework around it.

>For heavy Claude Code users, the most useful response is usually not following the drama, but rewriting their own CLAUDE.md.

The Claude Code source leak looked like gossip on the surface, but the more valuable part for me was what it revealed about how the product is actually operated.

Once 510,000 lines of code across 1,902 files were laid out in the open, the thing that stood out was not simply model strength. It was how much effort Anthropic had put into designing the environment around the model.

1. Less than 5% is really about calling the model

After reading the main analyses, my strongest reaction was simple: the model is not the whole product. The harness is.

If Claude Code is a car, the model is the engine. The part that actually lets you drive is everything around it: the brakes, steering, and instruments, which in this case means permissions, memory, and tool orchestration.

That is why products built on the same model can feel wildly different. The gap is often not intelligence. It is the quality of the control layer around the intelligence.

2. CLAUDE.md is not just read once at startup

One of the most actionable discoveries was that CLAUDE.md is not a startup-only note.

The leaked code made it clear that the system reloads relevant instructions on every new turn. In other words, this is not decorative documentation. It is a working manual the system keeps consulting.

And it exists in layers:

- ~/.claude/CLAUDE.md for global habits

- ./CLAUDE.md for project rules

- .claude/rules/*.md for modular guidance

- CLAUDE.local.md for private notes that should not go into git

If all you put there is "please answer in Traditional Chinese," you are wasting one of the highest-leverage surfaces in the workflow.
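To make the layering concrete, here is a minimal Python sketch of how layered instruction files could be gathered from broadest to narrowest scope. This is not the leaked implementation: the function names, the merge order, and the idea of concatenating everything into one block are my own assumptions for illustration. Only the four file locations come from the list above.

```python
from pathlib import Path


def collect_instruction_files(project_root: Path, home: Path) -> list[Path]:
    """Return the instruction files that exist, broadest scope first.

    The ordering is an assumption made for this sketch.
    """
    candidates = [
        home / ".claude" / "CLAUDE.md",  # global habits
        project_root / "CLAUDE.md",      # project rules
    ]
    rules_dir = project_root / ".claude" / "rules"
    if rules_dir.is_dir():
        # Modular guidance, sorted so the order is deterministic.
        candidates.extend(sorted(rules_dir.glob("*.md")))
    candidates.append(project_root / "CLAUDE.local.md")  # private notes
    return [path for path in candidates if path.is_file()]


def build_instruction_block(project_root: Path, home: Path) -> str:
    """Concatenate every layer into one text block, labeled by source."""
    parts = []
    for path in collect_instruction_files(project_root, home):
        body = path.read_text(encoding="utf-8").strip()
        parts.append(f"# From {path}\n{body}")
    return "\n\n".join(parts)
```

If something like build_instruction_block runs on every turn, each layer is a place where a rule you write once keeps getting applied, which is why a one-line file leaves so much on the table.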

3. The real value of built-in agents is role separation

What impressed me most was not the number of agents. It was how clearly each role was bounded.

The Explore Agent leans read-only. The Plan Agent leans toward structuring work. The Verification Agent leans toward trying to break things. That is much closer to the division of labor inside a mature team than to the fantasy of one brilliant actor doing everything alone.

This is also a reminder that AI workflow failures often come less from weak capability and more from mixed responsibilities. When one system is asked to generate, review, and approve at the same time, optimism bias becomes almost inevitable.
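One way to picture role separation is as a tool allowlist per role. The sketch below is purely illustrative: the AgentRole class, the tool names, and the dispatch function are hypothetical and are not taken from the leaked source. It only shows the general idea that an exploring role can be denied write access by construction.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentRole:
    """A role is a name plus the set of tools it may call (hypothetical)."""
    name: str
    allowed_tools: frozenset[str]

    def can_use(self, tool: str) -> bool:
        return tool in self.allowed_tools


# Hypothetical tool names, chosen only to mirror the three roles above.
EXPLORE = AgentRole("explore", frozenset({"read_file", "search"}))
PLAN = AgentRole("plan", frozenset({"read_file", "search", "write_plan"}))
VERIFY = AgentRole("verify", frozenset({"read_file", "search", "run_tests"}))


def dispatch(role: AgentRole, tool: str) -> str:
    """Refuse any tool call that falls outside the role's boundary."""
    if not role.can_use(tool):
        raise PermissionError(f"{role.name} agent may not call {tool}")
    return f"{role.name} -> {tool}"
```

With boundaries like these, the agent that generates a change is never the same one that signs off on it, which is the point of the separation.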

4. Child agents share cache, so parallel work is not pure waste

Another important design choice is how context copies and caching work together.

When the main thread forks multiple child agents, they do not all start from zero. They start from a nearly identical context prefix, which a cache can serve again instead of reprocessing it for each child. That means parallel work does not necessarily multiply cost the way many people assume.

It also explains why Claude Code often feels more natural in a multi-threaded workflow than in a single long session that tries to carry everything alone.
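A rough back-of-the-envelope model shows why a shared prefix matters. Everything here is an assumption for illustration: the function names, the token counts, and the cached-read discount are made up, and the model only counts input tokens for the child agents. Actual pricing and cache behavior depend on the provider.

```python
def uncached_cost(shared_tokens: int, unique_tokens: int,
                  children: int, price_per_token: float) -> float:
    """Every child pays full price for the shared prefix and its own tokens."""
    return children * (shared_tokens + unique_tokens) * price_per_token


def cached_cost(shared_tokens: int, unique_tokens: int, children: int,
                price_per_token: float, cache_read_multiplier: float) -> float:
    """Every child reads the shared prefix at a discounted rate (assumed)
    and pays full price only for its own unique tokens."""
    per_child = (shared_tokens * cache_read_multiplier + unique_tokens) * price_per_token
    return children * per_child


if __name__ == "__main__":
    # Illustrative numbers only: 100k shared tokens, 2k unique per child,
    # 4 children, and cached reads assumed to cost 10% of a normal read.
    full = uncached_cost(100_000, 2_000, 4, 1.0)
    shared = cached_cost(100_000, 2_000, 4, 1.0, 0.1)
    print(f"without cache: {full:.0f} units, with cache: {shared:.0f} units")
```

Under these assumed numbers the shared prefix dominates the bill, so discounting it changes the economics of forking far more than the number of children does.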

5. Conversations get compressed, so memory deserves skepticism

Another useful reminder from the leak is that context compression is more aggressive than many users realize.

When the conversation grows long and tokens get expensive, the system preserves certain files and summaries, but not every intermediate detail survives intact. The AI may sound like it remembers more than it actually does.

That is why re-reading files, refreshing context, and compacting intentionally are not signs of paranoia. They are normal maintenance for longer-running work.
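To see why details go missing, it helps to sketch what any compaction step has to do. The code below is a toy model, not Claude Code's actual algorithm: the four-characters-per-token estimate, the naive_summary placeholder, and the keep_recent parameter are all assumptions chosen for illustration.

```python
from typing import Callable


def estimate_tokens(text: str) -> int:
    """Crude estimate, assuming roughly four characters per token."""
    return max(1, len(text) // 4)


def naive_summary(messages: list[str]) -> str:
    """Placeholder summarizer: keeps only the first 40 characters of each
    message. A real system would ask a model, but it would still be lossy."""
    heads = " | ".join(m[:40] for m in messages)
    return f"[summary of {len(messages)} earlier messages] {heads}"


def compact(messages: list[str], budget: int, keep_recent: int = 2,
            summarize: Callable[[list[str]], str] = naive_summary) -> list[str]:
    """If the history exceeds the budget, replace older messages with one
    summary and keep the most recent ones verbatim."""
    total = sum(estimate_tokens(m) for m in messages)
    split = len(messages) - keep_recent
    if total <= budget or split <= 0:
        return list(messages)
    older, recent = messages[:split], messages[split:]
    return [summarize(older)] + recent
```

Whatever the summarizer is, anything it leaves out of the older messages is gone for the rest of the session, which is exactly the situation where re-reading the file beats trusting the transcript.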

6. I rewrote my own CLAUDE.md because of this

The most direct thing I did after reading about the leak was not to keep following the drama. It was to change my own working rules.

I made several instructions much more explicit:

- after editing files, run verification instead of trusting that writes succeeded

- read large files in chunks instead of assuming one pass is complete

- after long conversations, re-read before editing instead of trusting memory

- if search results look too small, suspect truncation and search again

These sound like small rules, but they directly change output quality.
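In CLAUDE.md these rules are written as plain-language instructions to the agent, but the same checks can be sketched as ordinary Python to show what each one guards against. The helper names and the truncation heuristic are my own assumptions, not anything from Claude Code.

```python
from pathlib import Path
from typing import Iterator


def write_and_verify(path: Path, content: str) -> None:
    """Write the file, then read it back instead of trusting the write."""
    path.write_text(content, encoding="utf-8")
    if path.read_text(encoding="utf-8") != content:
        raise IOError(f"verification failed for {path}")


def read_in_chunks(path: Path, lines_per_chunk: int = 200) -> Iterator[str]:
    """Yield a large file in fixed-size line chunks so no part is skipped."""
    chunk: list[str] = []
    with path.open(encoding="utf-8") as handle:
        for line in handle:
            chunk.append(line)
            if len(chunk) == lines_per_chunk:
                yield "".join(chunk)
                chunk = []
    if chunk:
        yield "".join(chunk)


def looks_truncated(results: list, limit: int) -> bool:
    """Heuristic (an assumption): a result count that reaches the tool's
    limit suggests the output was cut off and the search should be narrowed
    or repeated."""
    return len(results) >= limit
```

Each helper turns an assumption ("the write worked", "I saw the whole file", "that was every match") into something that is checked.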

Closing note

The biggest lesson I took from the Claude Code leak is that even very strong AI still depends on a good operating framework.

Reliable AI tools are not built only by upgrading the model. They are built by getting permissions, memory, verification, and tooling to work together as one system.

PS

If the long-term result of this incident is that more people start taking their own CLAUDE.md seriously, then it may end up being an expensive but useful public lesson.